Introducing Pyxie - A Little Python to C++ Compiler

August 05, 2015 at 09:14 PM | categories: python, subset, compilation, C++, microcontrollers

Over the past several months, during evenings and weekends, I've been working on a new python to C++ compiler called Pyxie (it's a play on words :). It has currently reached version 0.0.16, and is just beginning to be usable for vaguely fun things.

It's not intended to be a full implementation of python, but rather a "Little Python" that is simple enough to be compiled to C++. "Little Python" will be a well defined subset of python that can be compiled. The reason for this is to allow me to write code in python that compiles to run on embedded systems like Arduino, MSP430 and ARM mbed platforms.

This makes it different from Micropython - which runs a python interpreter on the microcontroller, and PyMite which effectively runs a simplified python VM. Both of those projects assume a device with more memory. Due to the constraints of these devices, the compiler is unlikely to ever support the entirety of the python language.

PLEASE NOTE It is not complete, and almost certainly won't do what you want, yet. If you're looking for a general python to C++ compiler - though not for arduino - take a look at ShedSkin. It is just beginning to be useful though for fun little things with an Arduino (or similar devices based on an Atmel Atmega 32U4), hence this post.

What job does it do?

Pyxie's job is to allow me to write python code, instead of C++ such that it runs on a microcontroller, and to do so efficiently. The reason I want this is to (eventually) use this with cubs and scouts.

- I quite like the idea of describing software in terms of the job it does. Since I came across the approach, I find it clarifies the purpose of a project dramatically.

Website

Pyxie has a site: http://www.sparkslabs.com/pyxie/

It's a work in progress and will become nicer and shinier as Pyxie itself gets nicer and shinier.

Release Schedule

There's no particular release schedule. I try not to have releases that are too large, or too far apart. What's planned to go into a release is done on a release by release basis. What actually has gone in is a reflection of what I've been up to in the evenings/weekends of any given week.

Name

The name is a play on words. Specifically, Python to C++ - can be py2cc or pycc. If you try pronouncing "pycc" it can be "pic", "py cc" or "pyc-c". The final one leads to Pixie.

Target Functionality

The guiding principle is that Little Python should look and act like a version of python that was never written. (After all python 1.x, 2.0, 2.5, 3.x, 3.5 are all "python" but simply different iterations) You could almost say that Little Python should look like a simplified "ret-con" version of python.

At present, a non-exhaustive high level list of things that are targeted is:

- Duck typing / lack of type declarations (but strong types)

- Whitespace for indentation

- Standard control structures (No else clauses on while/for :) )

- Standard built in types

- Lists and dictionaries

- Easy import/use of external functionality

- User functions (and associated scoping rules)

- Objects, Classes, Introspection, and limited __getattr__ / __setattr__

- Namespaces

- Exceptions

- PEP 255 style generators (ie original and simplest)

Quite a few of these may well be challenging in a teeny tiny microcontroller environment, but they're worth targeting.

Status

So what DOES this do at the moment?

At present it supports a very simple subset of python:

- strings*, ints, booleans, floats

- Variables - and typing via basic type inference

- while, for, if/elif/else

- Parenthesised expressions

- Full expression support for ints

- For loops actually implement an iterator protocol under the hood

- The ability to pull in #include's using C++ preprocessor style directives - since they're automatically python comments
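As a rough illustration of what "an iterator protocol under the hood" means, here is a for loop next to a hand-expanded equivalent. This is ordinary Python 3 written for this example, not Pyxie's generated C++; the function names are made up for the sketch.

```python
# Illustrative only: a for loop, and the same loop rewritten in terms of
# an explicit iterator. Plain Python 3, not Pyxie's generated code.

def for_loop(limit):
    collected = []
    for i in range(limit):
        collected.append(i)
    return collected


def desugared_loop(limit):
    collected = []
    iterator = iter(range(limit))   # obtain an iterator from the iterable
    while True:
        try:
            i = next(iterator)      # ask the iterator for the next value
        except StopIteration:       # the iterator signals it is exhausted
            break
        collected.append(i)
    return collected
```

Both functions build the same list; the second simply spells out the steps that a for loop performs implicitly.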

It also has 2 compilation profiles:

- Desktop/libc - that is for development and testing

- Arduino Leonardo - that includes things like DF Beetle/etc

This is a simple benchmark for a desktop:

    countdown = 2147483647
    print "COUNTING DOWN"
    while countdown:
        countdown = countdown - 1

    print "BLASTOFF"

(That "print" will become a python3 style print BTW - it's currently a python2 style statement while I'm bootstrapping the compiler!)

The arduino "blink" program for the leonardo profile:

    led = 13

    pinMode(led, OUTPUT)

    while True:
        digitalWrite(led, HIGH)
        delay(1000)
        digitalWrite(led, LOW)
        delay(1000)

This is unlikely to ever be a completely general python to C++ compiler, especially in the context of a desktop machine. If you're after that, there are better options (shedskin and Cython spring to mind).

Where to get it -- Ubuntu 14.04LTS (trusty), 15.04 (vivid)

(This is the recommended mechanism)

I build packages for this in my PPA. You can install pyxie as follows -- add my PPA to your ubuntu, update and install the python-pyxie package.

    sudo add-apt-repository ppa:sparkslabs/packages
    sudo apt-get update
    sudo apt-get install python-pyxie

This will automatically pull in the dependencies - PLY and guild

Where to get it -- PyPI

Simplest approach using PyPI:

sudo pip install pyxie

Alternatively go to the Pyxie page and download from there...

... and then do the usual sudo python setup.py install dance.

Dependencies

Uses David Beazley's PLY package. Either use your package manager, or install from pypi:

At some later point in time the batch compiler will make use of guild - my actor library. That can be found here:

Usage - Default Profile

Given the following python file : benchmark.pyxie

    countdown = 2147483647
    print "COUNTING DOWN"
    while countdown:
        countdown = countdown - 1

    print "BLASTOFF"

You compile this as follows:

pyxie compile benchmark.pyxie

That compiles the file using the standard/default profile, and results in a binary (on linux at least) that can be run like this:

./benchmark

Usage - Arduino Profile

Given the following python file : arduino-blink.pyxie

    led = 13

    pinMode(led, OUTPUT)

    while True:
        digitalWrite(led, HIGH)
        delay(1000)
        digitalWrite(led, LOW)
        delay(1000)

You compile this as follows:

pyxie --profile arduino compile arduino-blink.pyxie

That compiles the file using the arduino leonardo profile, and results in a hexfile called this:

arduino-blink.hex

Depending on your actual Leonardo type device you can use AVRdude to load the code onto the device. If you're using a bare Atmel 32U4, you can use dfu-programmer to load that hexfile onto your device.

Usage - Summary

More generally:

pyxie -- A little python compiler

Usage:

pyxie -- show runtime arguments

pyxie --test run-tests -- Run all tests

pyxie --test parse-tests -- Just run parse tests

pyxie --test compile-tests -- Just run compile tests

pyxie --test parse filename -- Parses a given test given a certain filename

pyxie parse filename -- parses the given filename, outputs result to console

pyxie analyse filename -- parses and analyse the given filename, outputs result to console

pyxie codegen filename -- parses, analyse and generate code for the given filename, outputs result to console. Does not attempt compiling

pyxie [--profile arduino] compile path/to/filename.suffix -- compiles the given file to path/to/filename

pyxie [--profile arduino] compile path/to/filename.suffix path/to/other/filename -- compiles the given file to the destination filename
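If you want to drive the compile commands above from a build script, a minimal sketch might look like this. The helper names `pyxie_command` and `compile_with_pyxie` are invented for this example, and it assumes `pyxie` is on your PATH and reports failure with a non-zero exit status, which is not stated anywhere in this post.

```python
import subprocess


def pyxie_command(source, profile=None, destination=None):
    """Build the argument list for a `pyxie [--profile X] compile` call.

    Hypothetical helper: it only mirrors the usage summary above.
    """
    argv = ["pyxie"]
    if profile is not None:
        argv += ["--profile", profile]
    argv += ["compile", source]
    if destination is not None:
        argv.append(destination)
    return argv


def compile_with_pyxie(source, profile=None, destination=None):
    """Run the compiler. Assumes a non-zero exit status means failure,
    in which case subprocess raises CalledProcessError."""
    subprocess.check_call(pyxie_command(source, profile, destination))
```

For example, `pyxie_command("arduino-blink.pyxie", profile="arduino")` produces the same arguments as the Arduino example earlier in this post.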

Open Source

Pyxie is Copyright © 2015 Michael Sparks, and Licensed under the Apache 2 license. It's not public on github at the moment because the internals are still changing somewhat between releases. Once this has stabilised I'll open up the git repo.

The code is however on github, so if you'd like early access, let me know. Obviously you can do what you like with the code from pypi within the reasonable limits of the apache 2 license!

It's developed entirely using my own kit/etc too, so it's nothing to do with work. (I mention this primarily because during the Micro:bit project I also built a scrappy python to C++ compiler. That had all sorts of limitations, but made me think "If I was to redo this from scratch, I'd...". This project is a result of that thinking, but as I say, nothing to do with work!)

Release History

This is to give some idea of progress. It's good (though obviously not swift) progress, with an average of a few weeks between releases. (Mainly because dev depends on spare time :)

- 0.0.1 - (rolled into 0.0.2 - Initial structure)

- 0.0.2 - supports basic assignment

- 0.0.3 - Ability to print & work with a small number of variables

- 0.0.4 - Mixed literals in print statements

- 0.0.5 - Core lexical analysis now matches language spec, including blocks

- 0.0.6 - Character Literals, "plus" expressions, build/test improvements

- 0.0.7 - Structural, testing improvements, infix operators expressions (+ - * / ) for integers, precedence fixes

- 0.0.8 - Internally switch over to using node objects for structure - resulting in better parsing of expressions with variables and better type inference.

- 0.0.9 - Grammar changed to be left, not right recursive. (Fixes precedence in un-bracketed expressions) Added standalone compilation mode - outputs binaries from python code.

- 0.0.10 - Analysis phase to make type inference work better. Lots of related changes. Implementation of expression statements.

- 0.0.11 - Function calls; inclusion of custom C++ headers; empty statements; language spec updates

- 0.0.12 - While loops, break/continue, Website, comparison operators, simple benchmark test

- 0.0.13 - if/elif/else,conditionals/boolean/parenthesised expressions.

- 0.0.14 - For loops implemented. Added clib code, C++ generator implementation, FOR loop style test harness, parsing and basic analysis of FOR loops using a range iterator

- 0.0.15 - clib converted to py clib for adding to build directory

- 0.0.16 - Adds initial Arduino LEONARDO support, improved function call, release build scripts

Supporting this

Please do! There's many ways you can help. Get in touch.

Summary

Pyxie is at a very early stage. It is a simple little python to C++ compiler that is at a stage where it can start to be useful for some limited arduino style trinkets.

Kicking the tires, patches and feedback welcome.

Serving packages for multiple distributions from a single PPA

August 03, 2015 at 02:21 PM | categories: pyxie, vivid, arduino, python, trusty, actors, ubuntu, iotoy, dfuprogrammer, kamaelia

So, I created a PPA for my home projects - either things I've written, or things I use. ( link to launchpad ) Anyway, I use Ubuntu 14.04LTS, primarily for stability, but making things available for more recent versions of Ubuntu tends to make sense.

To cut a long story short, the way you can make packages available for later distributions is to copy the packages into those distributions. Obviously that will work better for packages that are effectively source packages (pure python ones) rather than binary packages.

The process is almost trivial:

- Go to your PPA, specifically the detail for your packages.

- Hit copy and select the packages you want to copy into a later ubuntu distribution

- Select the distribution you wish to copy them to (eg Vivid for 15.04, etc)

- Then tell it to copy over the packages.

And that's pretty much it.

As a result, you can now get the following packages for 14.04.02LTS and 15.04 from my PPA:

- pyxie - A "Little Python" to C++ compiler. Very much a WIP. Supports Arduino as a target platform. (WIP)

- arduino-iotoy - IOToy interfaces for running on arduino (STABLE)

- python-iotoy - Python library for working with IOToy devices - local and remote - and publishing on the network. (STABLE)

- guild - My new Actor library. (Pun: An Actor's Guild) (STABLE)

- guild.kamaelia - when "done" will contain a collection of components that were useful in Kamaelia, but suitable for use with Guild (WIP)

Older libraries there:

- axon - Kamaelia's core concurrency library (STABLE)

- kamaelia - Kamaelia - a collection of components that operate concurrently (STABLE)

- rfidtag - A wrapper around TikiTag/TouchaTag tags. (Has a binary element, unknown as to whether will work on 15.04 - let me know?!) (STABLE)

- waypoint - A tool for allowing opt-in tracking at events - for example to allow people to pick up a personalised programmer after the event. (STABLE)

Libraries/tools by others that I use:

- behave - Library I use for BDD

- dfu-programmer - Tool I use for flashing bare Atmel 32U4 chips. It does a lot more though.

- parse - used by behave

- parse-type - used by behave

Found this from this helpful post on stack overflow http://askubuntu.com/questions/30145/ppa-packaging-having-versions-of-packages-for-multiple-distros

Hello Microbit

March 28, 2015 at 07:38 PM | categories: C, microbit, arduino, BBC R&D, blockly, work, BBC, C++, microcontrollers, python, full stack., full stack.D, webdevelopment, mbed, kidscoding

So, a fluffy tech-lite blog on this. I've written a more comprehensive one, but I have to save that for another time. Anyway, I can't let this go without posting something on my personal blog. For me, I'll be relatively brief. (Much of this is a copy and paste from the official BBC pages; the rest, however, is personal opinion etc)

Please note though, this is my personal blog. It doesn't represent BBC opinion or anyone else's opinion.

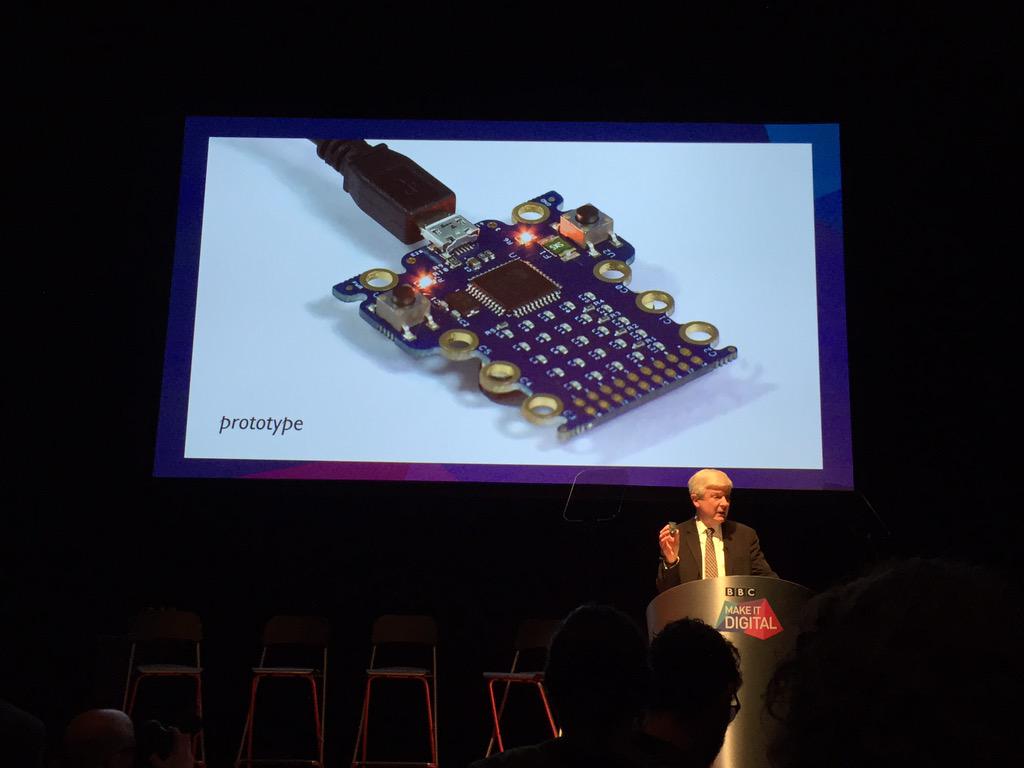

Just over 2 weeks ago, the BBC announced a small device currently nicknamed the BBC Micro Bit. At the launch event, they demonstrated a prototype device:

The official description of it is this:

BBC launches flagship UK-wide initiative to inspire a new generation with digital technology

The Micro Bit

A major BBC project, developed in pioneering partnership with over 25 organisations, will give a personal coding device free to every child in year 7 across the country - 1 million devices in total.

...

Still in development and nicknamed the Micro Bit,* it aims to give children an exciting and engaging introduction to coding, help them realise their early potential and, ultimately, put a new generation back in control of technology. It will be distributed nationwide from autumn 2015.... The Micro Bit will be a small, wearable device with an LED display that children can programme in a number of ways. It will be a standalone, entry-level coding device that allows children to pick it up, plug it into a computer and start creating with it immediately.

...

the Micro Bit can even connect and communicate with these other devices, including Arduino, Galileo, Kano and Raspberry Pi, as well as other Micro Bits. This helps a child’s natural learning progression and gives them even more ways of expressing their creativity.

...

Early feedback from teachers has shown that it encourages independent learning, gives pupils a strong sense of achievement, and can inspire those who are not usually interested in computers to be creative with it.

For me, the key points about the prototype:

- Targeted at children, and their support networks (teachers, parents etc). This is reflected throughout the system. It doesn't underestimate them though. (I work with cubs and scouts in my spare time, and I know they're much more willing to try than adults - which means they can often achieve more IMO. Especially at scout age)

- Small programmable fun device, instant feedback, with lots of expansion capability with a gentle gradual curve.

- Web based interfaces for coding, as well as offline interfaces

- Graphical, python or C++ code running on the device

- Designed to work well with other things and hook into larger eco-systems where there's lots of users.

(That's all public information, but for me those are really the key points)

Aside from the obvious -- why did the announcement excite me? Simple: While it wasn't my vision - it was Howard Baker's and Jo Claessen's, I implemented that Microbit prototype and system from scratch. While an earlier iteration had been built and used at events, I was asked to build a prototype we could test in schools and to take to partners to take to scale. (I couldn't work from that earlier iteration, but rather worked from the source - Howard and Jo. It was a harder route, but rewarding)

For those who recognise a similarity between this and Dresscode which I blogged about last summer, the similarities you see are co-incidental. (I was clearly on the same wavelength :-) ) While Microbit was in an early stage pre-prototype phase there, I hadn't seen it.

My thinking behind Dresscode though, was part of the reason I jumped at the chance to help out. It also meant that I had a head start on some aspects, and in particular, the IOToy and Guild toolsets I developed previously as part of some IOT work in R&D came in handy in building the prototype. (That and the fact I've used the arduino toolset a fair amount before)

Anyway, as I say, I can't say much about the project, but it was probably the most intense development project I've worked on. Designing & building the hardware from scratch, through to small scale production manufacture in 3 months, concurrent with building the entire software stack to go with it - compilation toolchains, UIs and so on, AND concurrent with that was the development of detailed documentation and specs for partners to build upon. Intense.

In the last month of core development I was joined by 2 colleagues in R&D (Matt Brooks and Paul Golds) who took my spike implementations of web subsystems and fleshed them out and made them work the way Howard and Jo wanted, and the prototype hardware sanity checked and tweaked slightly by Lawrence Archard. There was still a month of development after that, so it was probably the most intense 4 months of development I've ever done. Well worth it though.

(Regarding names, project names change a lot. While I was building our device in even that short 3 months, it got renamed several times, and since! Don't read too much into names :-) Also, while it doesn't fit anywhere else, it was Fiona Iglesias who was the project manager at the time who asked me if I could do this, and showed a great leap of faith and trust when I said "yes" :-) Balancing the agile process I needed to use against the BBC being very process driven (by necessity) was a thankless task!)

Finally some closing things. On the day of the launch I posted a couple of photos in my twitter feed, which I'll repeat here.

The first was my way of going "yay". Note the smiley bit :-)

So, look what I made! http://t.co/OTCn14YN7s Earlier revs were built on my kitchen table :-) pic.twitter.com/2k9a2UBOZh

-- Michael (@sparks_rd) March 12, 2015

The second related to an earlier iteration:

Earlier iteration: pic.twitter.com/bmQ2G7KK2t

-- Michael (@sparks_rd) March 12, 2015

Anyway, exciting times.

What happens next? Dunno - I'm not one of the 25 partners :-)

The prototype is very careful about what technologies it uses under the hood, but then that's because I've got a long history of talking and writing about why the BBC uses, improves and originates open source. I've not done so recently because I think that argument was won some time ago. The other reason is because actually leaving the door open to release as open source is part of my job spec.

What comes next depends on partners and BBC vagaries. Teach a child to code though, and they won't ever be constrained by a particular product's license, because they can build their own.

Anyway, the design does allow them to change the implementation to suit practicalities. I think Howard and Jo's vision however is in safe hands.

Once again, for anyone who missed it at the top though, this is a personal blog, from a personal viewpoint, and does not in any way reflect BBC opinion (unless coincidentally :).

I just had to write something to go "Yay!" :-)

Ubuntu PPA Packages of sparkslabs projects

March 01, 2015 at 05:43 PM | categories: trusty, arduino, python, actors, ubuntu, iotoy, dfuprogrammer, kamaelia

I've been meaning to do this for a long while now, but have finally gotten around to it. I've set up an Ubuntu PPA for the repositories I have on github. The aim of this is to simplify sharing things with others.

My target distribution is Ubuntu 14.04.1LTS

In order to install any of the packages under Ubuntu, you first add my new PPA, and update and install using apt-get.

ie Add my PPA to your machine:

sudo add-apt-repository ppa:sparkslabs/packages

Then do an update:

sudo apt-get update

You can then install any of the things I've packaged up as follows:

- Guild:

    sudo apt-get install guild

- The arduino libraries for IOToy:

    sudo apt-get install arduino-iotoy

- Python IOToy libraries:

    sudo apt-get install python-iotoy

- dfu-programmer:

    sudo apt-get install dfu-programmer

- The nascent Kamaelia compatibility layer for Guild:

    sudo apt-get install python-guild-kamaelia

- Python RFID Tag Reader Module:

    sudo apt-get install python-rfidtag

- Waypoint (used the RFID tag reader to enable opt-in tracking round a venue):

    sudo apt-get install python-waypoint

- Axon:

    sudo apt-get install python-axon

- Kamaelia:

    sudo apt-get install python-kamaelia

Mainly for my convenience, but will also make install docs for my projects simpler :-)

Installing Python Behave On Ubuntu 14.04.1LTS

February 16, 2015 at 12:26 PM | categories: python, bdd, behave, packaging, ubuntu

Brief notes on how I installed Behave - a behaviour driven development package - on Ubuntu 14.04.1LTS.

First off, why? Well, the common answer for installing things would be "use pip" or "use virtualenv". I tend to prefer to use debian packages when installing things under Ubuntu - primarily because then I only have one packaging system to worry about. This works fine for lots of things since lots of things have sufficiently recent packages. In the case of something like Behave though, this doesn't have any package, so we need to build one for that, and its dependencies.

Partly as a note to self, and for anyone else who may be interested, this is how I did it. First of all we need the sources of behave and its dependencies:

    hg clone https://bitbucket.org/stoneleaf/enum34
    git clone git@github.com:r1chardj0n3s/parse
    git clone git@github.com:jenisys/parse_type.git
    git clone git@github.com:behave/behave

To package these up we need some other things too:

    sudo apt-get install python-stdeb
    sudo apt-get install devscripts

One of the dependencies is available as a package:

sudo apt-get install python-hamcrest

Then just package up each dependency and install.

First off, enum34:

    cd enum34/
    python setup.py sdist
    cd dist/
    py2dsc enum34-1.0.4.tar.gz
    cd deb_dist/
    cd enum34-1.0.4
    debuild -uc -us
    cd ..
    sudo dpkg -i python-enum34_1.0.4-1_all.deb
    cd ../..
    rm -rf dist
    cd ..

Then parse:

    cd parse
    python setup.py sdist
    cd dist/
    py2dsc parse-1.6.5.tar.gz
    cd deb_dist/
    cd parse-1.6.5
    debuild -uc -us
    cd ..
    sudo dpkg -i python-parse_1.6.5-1_all.deb
    cd ../..
    rm -rf dist
    cd ..

Then parse_type:

    cd parse_type/
    python setup.py sdist
    cd dist/
    py2dsc parse_type-0.3.5dev.tar.gz
    cd deb_dist/
    cd parse-type-0.3.5dev
    debuild -uc -us
    cd ..
    sudo dpkg -i python-parse-type_0.3.5dev-1_all.deb
    cd ../..
    rm -rf dist/
    cd ..

And finally behave itself:

    cd behave/
    python setup.py sdist
    cd dist/
    py2dsc behave-1.2.6.dev0.tar.gz
    cd deb_dist/
    cd behave-1.2.6~dev0
    debuild -uc -us
    cd ..
    sudo dpkg -i python-behave_1.2.6~dev0-1_all.deb
    cd ../..
    rm -rf dist/
    cd ..
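The four builds above all follow the same sequence, so the pattern can be written down once. The following is only a sketch: `stdeb_build_commands` is a made-up helper that returns the shell commands as strings (it does not run them), and the file and directory names have to be passed in explicitly because, as the parse_type and behave steps show, the tarball, build directory and .deb names do not always match each other.

```python
def stdeb_build_commands(checkout, sdist, build_dir, deb):
    """Return, in order, the shell commands used for one package.

    Hypothetical helper for illustration; mirrors the enum34 steps.
    """
    return [
        "cd %s" % checkout,
        "python setup.py sdist",
        "cd dist/",
        "py2dsc %s" % sdist,
        "cd deb_dist/",
        "cd %s" % build_dir,
        "debuild -uc -us",
        "cd ..",
        "sudo dpkg -i %s" % deb,
        "cd ../..",
        "rm -rf dist",
        "cd ..",
    ]


# Print the enum34 sequence so it can be reviewed before running by hand.
for command in stdeb_build_commands(
        "enum34/", "enum34-1.0.4.tar.gz", "enum34-1.0.4",
        "python-enum34_1.0.4-1_all.deb"):
    print(command)
```

Printing the commands rather than executing them keeps the `sudo dpkg -i` step under manual control.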

Quick guide to packaging python libraries

February 09, 2015 at 06:22 PM | categories: python, packaging, ubuntu

The short and simple guide to installing python packages for Ubuntu:

- Install python-stdeb

- Build an sdist version of your package, make sure it's all good

- Run py2dsc on the library, debuild the resulting package

- Install

That's probably a little terse, but gets over how simple this can be if you can ensure that your package's sdist builds cleanly.

Short example of steps involved:

    sudo apt-get install python-stdeb
    tar zxvf guild-0.1.0.tar.gz
    cd guild-0.1.0/
    python setup.py sdist
    cd dist
    py2dsc guild-0.1.0.tar.gz
    cd deb_dist
    cd guild-0.1.0
    debuild -uc -us
    cd ..
    sudo dpkg -i python-guild_0.1.0-1_all.deb

(This has been languishing in my drafts folder for a while, so I've shortened to post)

Why I'm watching the Commonwealth Games

July 24, 2014 at 10:42 PM | categories: people, python, IP Studio, javascript, bbc, C++, dayjob, CWG

People don't talk enough about things that go well, and I think I'm guilty of that sometimes, so I'll talk about something nice. I spent today watching lots and lots of the Commonwealth Games and also talking about the output too.

Not because I'm a sports person - far from it. But because the output itself - was the result of hard work by an amazing team at work paying off with results that on the surface look like ... "those are nice pictures".

The reality is so much more than that - much like they say a swan looks serene gliding across a lake ... while paddling madly. The pictures themselves - Ultra HD - or 4K are really neat - quite incredible - to look at. The video quality is pretty astounding, and given the data rates coming out of cameras it's amazing really that it's happening at all. By R&D standards the team working on this is quite large, in industry terms quite small - almost microscopic - which makes this no mean feat.

Consider for a moment the data rate involved at minimal raw data rate: 24 bits per pixel, 3840 x 2160 pixels per picture, 50 pictures per second. That's just shy of 10Gbit/s for raw data. We don't have just one such camera, but 4. The pixels themselves have to be encoded/decoded in real time, meaning 10Gbit/s in + whatever the encode rate - which for production purposes is around 1-1.2Gbit/s. So that's 45Gbit/s for capture. Then it's being stored as well, so that's 540Gbyte/hour - per camera - for the encoded versions - so 2TB per hour for all 4 cameras. (Or of that order if my numbers are wrong - ie an awful lot)
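The arithmetic behind those figures can be checked with a few lines of Python. This is just a back-of-envelope sketch: it takes the upper end of the quoted encode rate (1.2Gbit/s) and assumes decimal units (1 Gbit = 10^9 bits, 1 TB = 1000 GB).

```python
BITS_PER_PIXEL = 24
WIDTH, HEIGHT = 3840, 2160
FRAMES_PER_SECOND = 50
CAMERAS = 4
ENCODED_GBIT_PER_SECOND = 1.2  # upper end of the 1-1.2Gbit/s quoted above

# Raw data rate for one camera, in Gbit/s.
raw_gbit = BITS_PER_PIXEL * WIDTH * HEIGHT * FRAMES_PER_SECOND / 1e9

# Each camera carries its raw feed in plus its encoded feed out.
capture_gbit = CAMERAS * (raw_gbit + ENCODED_GBIT_PER_SECOND)

# Storage for the encoded stream: Gbit/s -> GByte/s -> GByte/hour.
stored_gbyte_per_hour = ENCODED_GBIT_PER_SECOND / 8 * 3600
total_tbyte_per_hour = CAMERAS * stored_gbyte_per_hour / 1000

print("raw per camera:      %.2f Gbit/s" % raw_gbit)
print("capture, 4 cameras:  %.2f Gbit/s" % capture_gbit)
print("storage per camera:  %.0f GByte/hour" % stored_gbyte_per_hour)
print("storage, 4 cameras:  %.2f TB/hour" % total_tbyte_per_hour)
```

That works out at roughly 9.95Gbit/s raw per camera, about 44.6Gbit/s for capture across the four cameras, 540GByte/hour per camera and about 2.16TB/hour in total, which matches the rounded numbers above.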

By itself that's an impressive feat really just to get that working in realtime, with hard realtime constraints.

However, we're not working with traditional broadcast kit - kit that's designed from the ground up with realtime constraints in mind and broadcast networks with realtime constraints in mind and systems with tight signalling for synchronisation. We're doing this with commodity computing kit over IP networks - albeit with ISP/carrier grade networking kit. The 4K cameras we use are connected to high end capture cards, and then the data is encoded using software encoders into something tractable (1.2Gbit/s data rate) to send around the network - to get the data into the IP world as quickly as possible.

This is distributed around the studio realtime core environment using multicast RTP. That means we can then have decoders, retransmitters, clean switchers, analysers, and so on all individually pick up the data they're interested in to do processing. This is in stark contrast to telling a video router matrix that you'd like some dedicated circuits from A to B - in that it's more flexible right out the box, and lower cost. The lower cost comes at the price of needing sufficient bandwidth.

At the edges of the realtime core we have stores that look for live audio/video/data and store them. We have services for transferring data (using DASH) from high speed storage on capture nodes to lower speed cheaper storage.

It also means that production can happen wherever you extend your routing to for your studio. So for example, the audio mixing for the UHD work was not happening in Glasgow, but rather in London. The director/production gallery was in a different building from the Commonwealth Games themselves. The mix they produced was provided to another team for output over a test/experimental DVB-T output. Furthermore it goes to some purely IP based receivers in some homes which are part of a test environment.

As well as this, each and every site that receives the data feeds can (and did) produce their own local mixes. Since this IS an experimental system doing something quite hard we have naturally had some software, hardware and network related issues. However, when we ceased receiving the "mix" from their clean switch output from Glasgow we were able to route round and pick up the feeds directly locally. Scaling that up, rather than having a London feed and regional opt-outs during big TV events (telethons etc), each of the regions could take over their local broadcast, and pull in the best of all the other regions, including London. Whether they would, I don't know. But this approach means that they could.

While the data/video being shipped itself is remarkable, what is doing the processing, and shipping is in many respects even more remarkable - it's a completely rebuilt broadcast infrastructure from the ground up. It provides significantly increased flexibility, with reduced cost with "just" more upfront costs at development time.

If that wasn't enough, every single aspect of the system is controllable from any site. The reason for this is each box on the network is a node. Each node runs a bunch of "processors" which are connected via shared memory segments, forming arbitrary pipelines sharing raw binary data and JSON structure metadata. All these processors on each node are made available as RESTful resources allowing complete network configuration and control of the entire system. So that means everything from vision mixing/data routing, system configuration, and so on gets done inside a browser. System builds are automated, and debian packages built via continuous integration servers. The whole thing is built using a mix of C++, Python and Javascript, and the result?

"Pretty nice pictures"

And that is really, the aim. Personally, I think 4K is pretty stunning and if you want a look, you should. If you're in the BBC in Salford, come and have a look.

So why am I posting this though? I don't normally talk about the day job here - that should really normally be on the BBC blog or similar really. It's because I made a facebook post bigging up my colleagues at work in the context of this, so I'll finish on that.

Basically, I joined the IP Studio team (from one R&D team to another) 2 1/2 years ago. At that time many of the things I mention above were a pipe dream - and since that time I've touched many parts of the system, only to have them ripped out and replaced with newer better ways of doing things. Over that time frame I've learnt loads, worked with great people, and been pretty humbled by everyone on the team, for different reasons.

If you ever feel "am I the only one to suffer from imposter syndrome", the answer is no. Indeed, I think it's a good thing - it means you're lucky to be working with great people. If you're ever upset at someone pointing at your code - which when you wrote it you were dead proud of - and saying "That's a hideous awful mess" then you're missing the point - if someone isn't saying that, your codebase can't improve. After all I've yet to meet anyone who looks at old code of theirs and doesn't think it's a hideous awful mess. Most people are just too polite to mention it.

However, just for one moment, let's just consider the simple elegance of the system - every capture device publishing to the network. Every display device subscribes to the network. Everything is network controllable. The entire system is distributed to where is best for them to work, and built using largely commodity kit. The upshot? A completely reinvented studio infrastructure that is fit for and native to a purely IP based world, while still working for modern broadcast too. Why wouldn't I have imposter syndrome? The team is doing amazing things, and that really means every individual is doing amazing things.

And yes, THAT song from the Lego movie springs to mind to me too.

Normal cynicism will be resumed in a later post :-)

(Oh, and as usual, bear in mind that while I'm referring to work stuff, I'm obviously a) missing things out b) not speaking for the BBC c) simplifying some of the practical issues, and don't try and pretend this is in any way official please. Now where's that asscovering close tag - ah here it is -> )

Connected Studio - Coding For Teens, Building the Dresscode

July 23, 2014 at 03:13 AM | categories: wearables, arduino, programming, iot, BBC, kidscoding

At a Connected Studio Build Studio last week, I along with 2 others in Team Dresscode prototyped tools for a proposition based around wearable tech. We worked through the issues of building a programmable garment, a programmable accessory, and a web app designed for teaching the basics of programming garments and accessories in a portable fashion.

We achieved most of what we set out to achieve, and the garment (as basic as it was) and accessory (again very basic) had a certain "I want one" effect on a number of people, with the web app lowering the barrier to producing behaviours. It was a very hectic couple of days, but as a result of the two days it's now MUCH clearer how to make this attractive to real teenagers, rather than theoretical ones - which is a bonus.

Probably the most worthwhile thing I've done at work at the BBC in fact and definitely this year - beyond sending a recommendation to people in strategy last summer regarding coding.

The rest of this post covers the background, what we built, why we built it and an overview of how.

NOTE Just because I work in BBC R&D does NOT mean this idea is something the BBC will work on or take on. It does NOT mean that the BBC approves or disapproves of this idea. It just means that I work there, and this was an idea that's been pitched. Obviously I believe in the idea and would like it to go through, but I don't get to decide what I do at work, so we'll see what happens.

The only way I can guarantee it will go through is if I do it myself - so it's well worth noting that this work isn't endorsed by the BBC - I "just" work there.

Connected What?

So, connected studio - what is that then?

Connected Studio is a new approach to delivering innovation across BBC Future Media. We're looking for digital agencies, technology start-ups and individual designers and developers (including BBC staff) who want to submit and develop ideas for innovative features and formats.

Note that this process is open to both BBC staff and external. It's a funded programme that seeks to find good ideas worth developing, and providing seed funding to test the ideas and potentially take forward to service. People can arrive in teams or form teams at the studio.

Connected Why?

And what was the focus for this connected studio?

- Inspiring young people to realise their creative potential through technology

- We want to inspire Britain's next generation of storytellers, problem solvers and entrepreneurs to get involved with technology and unlock the enormous creative potential it offers

- The challenge in this brief is to create an appealing digital experience with a coding component for teenagers aged 13-16

- Your challenge is to make sure we inspire not just teenagers in general, but teenage girls aged 13-16 in particular. Ideally, we're looking for ideas that appeal to both boys and girls, however, we're particularly keen to see ideas that appeal to girls.

The early stages involve an idea development day - called a Creative Studio. At this stage people either arrive with a team and some ideas and work them up in more detail on the day, or arrive with an idea they have the core of and are looking for people to help them work up the idea, or are simply interested in finding an idea they think is worth working on.

That results in a number of pitches for potential services/events/etc the BBC could take forward. Out of these a number are taken forward to a Build Studio.

What happened on the way to the Build Studio?

So, that's the background. I signed up for the studio because I was interested in joining a team and interested in working with people outside our department - with a grounding either in BBC editorial and/or an external agency's viewpoint. I also had the core of an idea with me - which I'll explain first.

At a meeting the previous week, an attendee asked the question "If the stereotype of boys is that they're interested in sports, what's the stereotype for girls?". The answer raised by another person there was "fashion". Now, if you're a lady reading this who says "don't be so sexist, I'm not interested in fashion", please bear in mind I don't like sports - things like the Commonwealth Games, Olympics, World Cup, etc all leave me cold. Also please bear in mind the person asking the question was a lady herself, and the answer came from another lady at the meeting.

Anyway, true or false, that idea stayed with me over the weekend - with me wondering "what's the biggest possible draw I can think of regarding fashion?". Now the closest I get to fashion is watching Zoolander, so I'm hardly a world authority. Even I know about London Fashion Week.

So, the idea I took with me to the creative studio was essentially wearable tech at London Fashion Week. I'd fleshed out the idea in my head more like this:

- The BBC hosts an event at London Fashion Week regarding wearable tech - with the theme of costumes for TV - which widens it beyond traditional fashion to props and so on. So you can have the light up suits and dresses but also props like wristbands, shoes, animatronic parrots, and so on. (Steampunk pirate?)

- Anyone and everyone can attend, BUT the catch is they must have created a piece of wearable tech to take to the event - if they do however, they can take their friends with them, and one of them - either themselves or their friends - can wear it down the catwalk.

To support this, you could have a handful of TV programmes and trails leading up to this, a web app for designing and simulating garments/etc of your choice, tutorials for how to build garments, and in particular allow transferable skills between the web app to creating programmable garments. So the TV drives you to the web, which drives you to building something, which drives you to an event, which drives you onto TV.

Now at the creative studio, there's essentially a "I've got this kind of idea" wall first thing in the morning - where if you've got an idea you can stand in front of those interested in joining a team and describe the idea- and then say what sort of skills/etc that you're after.

In my case I described the above idea, and said that I was primarily looking for people with an editorial or business oriented perspective. After all, while the above sounds good to me, I was never a teenage girl, and let's face it I was in the geeky non-cool demographic when I was a teenage boy :-) The people who joined me were Emily, who has worked on various events at the BBC, primarily in non-techie areas including Radio 1's Big Weekend, Ben from a digital agency and Tom from Nesta - a great mix of people. Thus formed Team Mix.

Team Mix's refined idea

We worked the idea through the day - with every element up for grabs and reshaping. The idea of being limited to London Fashion Week was changed to be the wider set of possibilities - after all the BBC does lots of events, from real physical ones like Radio 1's Big Weekend, through Glastonbury, the Proms and Bang Goes the Theory, through to event TV including things like The Voice.

After a session with a member of the target audience, we realised that while the idea was neat, for a teenager asking them to build something for a catwalk is actually asking an awful lot. Yes, while showing off, and finding your own identity is a big thing, not losing face, and not doing something to lower your status is a real risk. "I couldn't make that". As a result, we realised that this could be sidestepped by whatever the event was being changed to "this is a collection of your favourite stars wearing these programmable outfits and you get to decide what and how those clothes behave". However, on the upside this made the web element much clearer and better connected.

It left the accessories part slightly less connected, but still the obvious starting point for building wearable tech. This left the idea therefore as a 3 component piece:

- Celebs with outfits that are programmable - a trend that seems to be happening anyway, and these would be worn at some interesting/cool event - to be decided in conjunction with a group running interesting/cool events :-)

- A website for creating behaviours via simple programming - allowing people to store and share their behaviours - the idea being that they control the outfits the celebs will wear.

- Tutorials for building wearable tech accessories - which are designed to be programmed using the same behaviours as the celeb outfits - making the skills transferable.

So the idea was pitched at the creative studio - and ours got through to the Build Studio stage.

Taking wearable tech to the Build Studio

Out of the 20-30 ideas pitched, it was one of 9 to get through to the build studio. However, another team had ideas related primarily to Digital Wrist Bands, and our team - Team Mix - was asked to join with theirs - Team DWB - resulting in the really non-sexy name Team Mix/DWB. That meant 8 teams at the studio. One of the teams didn't show, meaning 7 builds.

It transpired that out of the two teams, only myself and Emily could make it to the build studio - so we chatted to Ben and Tom about what they'd like to see achieved, and also to those on Team DWB. The core of their idea was complementary to our accessories idea so we decided to focus that part of our build on wristbands.

So, what was our ambition for the Build Studio? The ambition was pretty much to do a proof of concept across the board, and to describe the audience benefits in clearer, concrete terms. Again, there was the opportunity to bounce the ideas off a couple of teenagers.

We had help from the connected studio team in seeking assistance from other parts of FM, and after a call across all of BBC R&D, and various groups in FM across the north and south, we had a volunteer called Tom who was a work placement trainee.

I also ran our aspiration for the build studio via Paul Golds at work, and asked him if he wanted to be involved, and in particular what would be useful, and while he couldn't make it to the Build Studio, he did provide us with a simple controllable web object.

What we built at the Build Studio

So the 3 of us set out to build the following over the 2 days of the build studio:

- A wearable tech garment, which mirrors a web app, and in particular has a collection of programmable lights.

- A web app for allowing a user to enter a simple program to control a representation of a web garment, with increasing levels of complexity. The app demonstrated implicit repetition, as well as sequence and selection.

- A bracelet made of thermoplastic with programmable behaviours controlling lights on the device, perhaps including feedback/control using an LDR.

And that's precisely what we built.

The wearable garment was built as follows:

- LEDs were sewn in strips - 8 at a time - onto felt. Conductive thread was then looped through and tied on as positive and negative rails - like you would with a breadboard. The strips were then attached underneath the garment so that the lights shone through.

- The microcontroller for the garment was a Dagu Mini - which is a simple £10 device which is also REALLY simple to hook up to batteries and bluetooth. I've used this for building sumobots with/for cubs too. It's able to take a bit of bashing.

- For each strip, the positive "rail" was connected to a different pin directly on the microcontroller - not necessarily the best solution, but works quite well for this controller.

- Code for this was really simple/trivial - simple strobing of the strips. The real system would be more complex. In particular this is the sort of code in pastebin

However, the "real" garment would use something like this iotoy library for control - to allow it to be controlled by bluetooth and also by an IOT stack.
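To give a flavour of what "simple strobing of the strips" can mean, here is a minimal sketch written as a Python simulation rather than Arduino code. It is not the code from the pastebin: the number of strips, the one-strip-at-a-time pattern and the names `strobe_frames` and `render` are all assumptions made for illustration.

```python
from itertools import islice


def strobe_frames(num_strips):
    """Yield an endless sequence of frames, one per time step.

    Each frame is a list of booleans, one per LED strip, with exactly one
    strip lit. On real hardware each boolean would be written to the pin
    driving that strip's positive rail, followed by a short delay.
    (Hypothetical helper, not taken from the original garment code.)
    """
    current = 0
    while True:
        yield [index == current for index in range(num_strips)]
        current = (current + 1) % num_strips


def render(frame):
    """Draw a frame as text: '#' for a lit strip, '.' for a dark one."""
    return "".join("#" if lit else "." for lit in frame)


if __name__ == "__main__":
    # Assumed: 4 strips. Show two full passes of the strobe.
    for frame in islice(strobe_frames(4), 8):
        print(render(frame))
```

On a microcontroller the same loop would set one output pin high, the rest low, wait a moment and move on, which is why one pin per strip is enough for this kind of effect.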

The web app is a simple client side affair only, and uses a small simple DSL for specifying behaviours. This is then transformed into a JSON data structure for evaluation. This uses jquery, bootstrap, and Paul's awesome little web controllable light up dress. You can see this prototype on my website.

Again, bear in mind that this is a very simple thing, and the result of a 1 - 1.5 day hack by a work placement trainee - treat it nicely :-) The fact it generates a JSON data structure internally which could be uploaded/shared is as much the point as the fact it has 3 micro-tutorials around sequencing, control and selection.
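As a rough idea of how a small DSL can be turned into a JSON data structure, here is a sketch in Python. The prototype itself was client side and its DSL isn't reproduced here, so the commands (`on`, `off`, `wait`), the output layout and the `parse_behaviour` name are all hypothetical.

```python
import json


def parse_behaviour(source):
    """Turn a tiny line-based behaviour script into a JSON-friendly dict.

    Hypothetical DSL, one command per line:
        on <light>      switch a light on
        off <light>     switch a light off
        wait <seconds>  pause before the next step
    Blank lines and lines starting with '#' are ignored.
    """
    steps = []
    for line_number, raw in enumerate(source.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split()
        command, args = parts[0], parts[1:]
        if command in ("on", "off") and len(args) == 1:
            steps.append({"do": command, "light": int(args[0])})
        elif command == "wait" and len(args) == 1:
            steps.append({"do": "wait", "seconds": float(args[0])})
        else:
            raise ValueError(f"line {line_number}: cannot parse {line!r}")
    # "repeat": True models implicit repetition: the steps loop forever.
    return {"repeat": True, "steps": steps}


if __name__ == "__main__":
    script = """
    on 1
    wait 0.5
    off 1
    wait 0.5
    """
    print(json.dumps(parse_behaviour(script), indent=2))
```

Because the result is plain JSON, the same behaviour could in principle be stored, shared, or sent to a device, which is the property the paragraph above is pointing at.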

The bracelet was created as follows:

- Thermoplastic was used as the wristband body - about 5-10g. This is plastic that melts in hot water (above 60 degrees), and becomes very malleable.

- A number of LEDs and an LDR. The LEDs were connected to the 3 digital pins, A0 and A1, with A2 connected between the LDR and a 10K resistor.

- A DF Robot Beetle - which is a tiny (and cheap ~£4.50) Arduino Leonardo clone - though it's the size of a 10p piece, and only has (easy) access to 6 pins.

- This was MUCH more fiddly than expected, and there's lots of ways of avoiding that issue. In the end the bracelet was electrically sound, though having access to a soldering iron (or rather a place where soldering could take place) would've been handy. We remarked several times that building the bracelet would've been easier at home - which I think is a positive statement really.

- Again the code part was very simple, and due to time constraints no behaviour of the LDR was implemented. On the upside, it does show how simple these things can be - http://pastebin.com/FzQqGSTv - again however, if the microcontroller was tweaked, you could use bluetooth and use something like the iotoy library above - allowing really rich interaction with other devices.
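No LDR behaviour was implemented, but the arithmetic behind reading one is short enough to sketch. The following Python function estimates the analogue reading at the point where an LDR meets a fixed resistor. The wiring order (supply, LDR, A2, 10K resistor, ground) and the example resistances are assumptions; the 0..1023 scale reflects the 10-bit analogue input on Leonardo-type boards. The name `ldr_adc_reading` is hypothetical.

```python
def ldr_adc_reading(ldr_ohms, fixed_ohms=10_000, adc_max=1023):
    """Estimate the analogue reading at the midpoint of an LDR divider.

    Assumed wiring: supply -> LDR -> A2 -> fixed resistor -> ground.
    The pin sees the fraction fixed / (ldr + fixed) of the supply voltage,
    which a 10-bit ADC reports on a 0..1023 scale.
    """
    if ldr_ohms < 0 or fixed_ohms <= 0:
        raise ValueError("ldr_ohms must be >= 0 and fixed_ohms must be > 0")
    fraction = fixed_ohms / (ldr_ohms + fixed_ohms)
    return round(adc_max * fraction)


if __name__ == "__main__":
    # Illustrative resistances only: an LDR's resistance falls as light rises.
    for label, ohms in (("bright", 1_000), ("dim", 100_000)):
        print(label, ldr_adc_reading(ohms))
```

With this wiring a brighter environment gives a higher reading, so a behaviour such as "light up when it gets dark" comes down to comparing the reading against a threshold.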

In fact we had a fair amount of this working before the meet the teenagers session. As a result we had concrete things to talk about with the teenagers, who warmed up when we pointed out the website and devices were the result of just a few hours work.

The feedback we had was really useful and great. This also meant that while physical and tech building continued on the second day, more time could be spent on the business case and audience benefit than might have otherwise - though never as much as you'd like.

Feedback we had was:

- Make it chunky!

- Can you make it velcro-able - so we can attach it to bags, clothing etc as well. It also made it more possible to add to things like belt buckles, jackets, hats etc.

- Can you have these devices controllable / communicating?

- Can you make the web app an Android/iOS app?

- Can you add in more sensors?

- Can it be repurposed?

- Call the project DressCode - this is one of those things that is a moment of genius, and so obvious and so appropriate in hindsight.

This instantly solved a problem we had in terms of keeping the wristband small and elegant - chunky makes everything a lot simpler. Velcro was a good idea, but a bit late in our build studio to start over. The idea of controlling and communicating is right up the angle we wanted to pursue (IOT type activities using the iotoy library) but didn't have time for in a 1 - 1.5 day hack.

The idea of having a downloadable app was one that we hadn't considered, but fits right in with the system - after all this would be able to directly interact with a bluetooth wristband, and the communications stack is already written. Having more sensors was obvious, and repurposing made sense if we switched to something using velcro.

It was a really useful session as you can imagine.

The Pitch :: Dress Code

So we carried through to the pitch - DressCode - Fashion of the Tech Generation. (After all, a generation now will be learning how to code behaviours of some kind - so this is a fun application of the skills they'll be learning)

We'd said in the creative pitch what we'd allow you to do, and now said we were allowing you to do just that - as you can see by comparing our core aspects with our prototypes.

Some key aspects of making this work would be the development of kit forms for tech bands which could then be made and sold by 3rd parties and partners - assuming they meet a certain spec.

The ideas behind the pitch included self-expression - the ability to look like the group or different from the group - and the ability to share behaviours and be part of something larger. The coding is a means of creating behaviours for something, but it also leads naturally into coding for other devices - such as indicator bands or gloves for cyclists, or shoes for runners - and into bottom up fund raising as happens with loom bands. It also works as a starting project for learning about coding, with a strong/fun inspiration piece involving TV - from headlining Glastonbury through to a light up suit for Lenny Henry for Comic Relief, where each red nose is a separate pixel to be controlled.

We described the user journey from the TV to the webapp to the techband and back to events and the TV show.

Pitching doesn't come naturally to me - the more outgoing you are in such situations the more likely your idea gains currency with others. Emily led our pitch and I thought did a sterling job.

Competition however was very fierce. If our idea doesn't go through, it won't be because it's a bad pitch or a bad idea, it'll just be because a different pitch is thought to be stronger/more appropriate for various reasons.

Hindsight

Hindsight is great. It's the stuff you think of after the time you needed it. In particular, one thing we were asked to do - and I think we kinda addressed it - at the build studio was to deal with the link between the techbands and the online web app and TV show. The way we dealt with it there was to decide to make available the same DSL to both wristbands (or blinkenbands as I nicknamed the code) and garments, ie to allow the same behaviour online or on a garment to control a blinkenband and so on.

That's pretty good, but there was a better, simpler solution staring us in the face all along:

- The felt strips we sewed LEDs onto, then wired up with conductive thread, were pretty simple to make. Even sewing in the Beetle would've been pretty simple.

- Putting velcro onto those would've been simple - meaning each piece of felt could be a blinkenband. Also each blinkenband could be attached inside a garment (as each strip was), allowing at once both more complex behaviours but also a much clearer link between the wristbands and the garments.

- Also, attaching bands inside a pre-existing garment drastically reduces the risk element for teenagers in building a garment - if it didn't look good, you still had wristbands. If it DID work, you gain more kudos. Furthermore, if you did this there's an incentive to make more than one wristband, with two side effects. Firstly, it encourages more experimentation and play - the best way to learn - but also leads to people potentially selling them to each other. Secondly, once they've done one, if they do more they could make an outfit that's all their own.

- Also, if you did this, you have an activity that while a little pricey (about £10 each all in) IS the sort of activity a Guides group would do - especially at camp (assuming a pre-programmed microcontroller) - in part because it's a group that tends to do more crafts type activities and would actually find the light useful in this situation. While people have ... "isn't that rather 19th century" ... views of things like Guides/Scouts, they do both cover the target demographic, and out of the two, it seems more likely to get Guides happily making felt/fabric programmable blinkenbands than Scouts - based on who the two sets attract.

For me, it's this final thought that made me think that this would definitely be a good starting point - since it seems a realistic way of connecting with the demographic. (Ironically - getting leaders on board in groups would perhaps be harder work!)

Closing thoughts

All in all, an interesting and useful couple of days, leaving me with some clear ideas of how to take things forward - with or without support from connected studio - which I think makes this a double win. Obviously getting 6-8 weeks to work on this for a pilot would be preferable to trying to cram it into my own time, but to me the Build Studio definitely proved the concept.

As mentioned, an actual real world example of this would have to:

- Identify a realistic event

- Make the audience benefit clearer, figure out a marketing strategy

- Build a better/more concrete garment - I started unpicking my jacket, but didn't have time to pixelise it...

- Build a better web app - close the loop to controlling the garment, linking to users

- A downloadable app - which links to the online account for spreading behaviours to a device.

- Better tutorials, and kits for building tech bands.

All in all a great and productive couple of days.

Next up, building robots for the BBC academy to teach basics of coding to BBC Staff (though I suspect they'd like tech bands too :-), but that's another day.

Later edit

(27th August)

Well, this is a later edit, and a shame to mention this, but Dresscode did not make it through the Connected Studio to Pilot. That's the nature of competitive pitching though, which is both sad, and awesome because it means a better idea did go through.

Regarding Dresscode itself, the Connected Studio team also said the following:

With regards to continuing your work on this independently, the idea is your IP and as it did not get taken to pilot the BBC doesn't retain any IP on Dress Code. The only caveat is that the idea cannot be pitched at another Connected Studio event. However, the Connected Studio modus operandi does not replace BBC commissioning, so feel free to progress the idea this way. We must add that if choosing the BBC commissioning route, it's up to you to talk to your line manager about this before any steps are taken.

So while Dresscode as a Connected Studio thing is no more, it could be something I could do independently later. We'll see.

Recent interesting links from April

May 07, 2014 at 04:26 PM | categories: interesting, links

In a further post of the intermittent kind, again, a collection of things I thought interesting and worth blogging about. Some of them are things I would previously have tweeted as interesting links, others are here because I'd like to come back to them at some point.

Fun things, Cool Stuff

- Ever wondered how engineers feel in a meeting with non engineers? If I ever say to you seven perpendicular lines you'll now know what I mean.

- Cake is one thing. Layer cake is another. Layer cake in the shape of a sphere? Now that's planetary cake

- BBC News article on Denmark being entirely modelled in Minecraft...

- Interesting hack for solar charging using PWM and an Arduino

- It's always nice to have some variety in typography.

- Cool tech - 3D printer for Carbon Fibre

- Ever wondered what modern websites look like on ancient browsers? No? Well, now you can look at the answer in one step.

Useful tool

- Work with Mercurial repositories using git

- If you edit things using markdown, you'll probably find dillinger.io a useful site to visit - a combined markdown editor/viewer.

Programming

- Some notes on lesser known programming models. Most of these should already be known to most developers, but it's an interesting link nonetheless - esp given the links to languages you may/may not have already seen before.

- How to use Go on android devices.

- Every so often people look at systems and say "that must be easy!" and severely underestimate the complexity of a piece of code - be it a game or website. For those who don't code understanding why making code based systems can be hard is also hard - but hopefully this site helps. This also goes hand in hand with effective estimation. That's hard for all the same reasons.

- Rust and Go are both getting a lot of airtime at the moment, and so it's useful to see a comparison of both Rust and Go.

- Interesting post on how to improve programming in the future, and for those not building coding tools how they could/should in future work.

- I'd never heard of Naur's ideas around programming as theory building, and it looks like it makes a significant contribution to theories around software development. Catenary's blog post regarding Naur stands as a useful introduction in a modern context. The original paper by Naur appears to be online as well.

- Ever wanted to write python with braces? Take a look at pythonb. Note, while it fixes the syntax, the standard library hasn't been flipped so you can't just compile and run. Fun thought though.

- Depressingly accurate youtube video of programming developments and how it could have been today. Talks about lots of things we should be doing now, but by going back to the roots of when these things actually started - often 30-40 years ago. This talk goes hand in hand really with the one on better programming. 30 minutes, but worth it, especially if you don't know an awful lot about the history of software development...

- Useful resource for learning CSS layout.

- Since PHP won't go away, more and more people are looking at optimising for it. One project called HippyVM may be worth your time if you do much with the language.

- The relatively new combined C++ FAQ - not just C++11, but also C++14 related...

Wider issues in tech

- An interesting business oriented take on innovation. Technically innovation has a different meaning, but it's an interesting opinion

- Interesting read on how copyright laws for digital content conflict with sharing that people are used to - specifically preventing people sharing books

- Sleep deprivation is a bad idea at the best of times, and it also has a severe effect on businesses, with sleep deprivation driving the failure rate of tech startups.

- Ever looked at a tech conference and thought "I can do better" or "there's no way I can do that"? The WAAA website is trying to encourage more people to talk. If you're not happy with diversity at conferences - propose a talk. We mimic those we perceive as being like us. By doing it yourself, you encourage others to do so too.

- ISO's recommendation on How to write standards

- Interesting read - the cost of telling lies

- Some people tweet without thinking about what their tweets tell others about their location. Worth a read - there are a lot of nutjobs out there who abuse twitter in that way.

- The Amiga will never die. Well, of course it did, but that doesn't stop people bringing life back into the old girl from time to time. This latest amiga hack of a Raspberry Pi and floppy drive is particularly entertaining. Much like the Amiga was itself. Nothing since has really quite had the same immediacy when it comes to digital art.

- Bit of a marketing post on Kanban (it mentions a particular product), but also quite a good post at illustrating the difference/benefit of Kanban over Scrum - even though on some superficial levels they're very similar.

Politics/Technology, etc

- You know that a technology has reached peak hype when the government starts talking about it. In this case, The Internet of Things.

- Is Nuclear energy about to have a comeback? Article discusses Thorium reactors - which reminds me of some writing by Isaac Asimov years ago...

This week's interesting links

March 22, 2014 at 03:00 PM | categories: interesting, links

Well, 2 weeks since the last post of this type, so this is a bumper edition. As before it's a collection of things I thought interesting and worth blogging about. Some of them are things I would previously have tweeted as interesting links, others are here because I'd like to come back to them at some point.

This post also (naturally) includes links to things I've written since the last one of these:

Some general reads

- Smart guy trap

- How to think

- A (long) but eloquent rant on Hacker Ethics (summary: patch or GTFO)

- Cunningham's Law

- More problems with Google's names policy

- Secrets of Branson's Success

- Talent Buddy - a site for practicing your coding skills in a variety of languages

Programming languages don't stay still. There's a new breed out there at the moment, of which I think Rust and Julia are most interesting. Nimrod caught my attention for the comparison angle. Python, Ruby, JavaScript won't always rule the roost so the question is always 'where next?' Hack - from Facebook - also looks interesting (and reminds me of Cython)

- Julia programming language

- Mozilla's Rust project

- The Wikipedia page on Rust

- Rust book targeted at Ruby developers

- Rust main web site

- An attempt at building a simple Operating System using Rust

- Resources for building games with Rust

- Rust game wiki

- There's a fairly useful Rust sub reddit

- Rust tutorial

- Nimrod is another new language

- Hack is Facebook's new language which adds gradual static typing to PHP. Reminds me of Cython (which I've used off and on for a few years now), which does something similar for python. Hack is significant I think because Facebook (who hardly have a small infrastructure) have ported most of their previously PHP codebase to Hack - for performance and reliability reasons.

Some really interesting code resources for kids and those who teach them popped up...

- There's now a large selection of Code Club resources on github

- Programming minecraft on the Raspberry Pi (pdf)

- ... blog of the minecraft coding book

- Web Flow - Web systems without coding

Some generally interesting technical posts

- Node habits for happiness

- Stack overflow thread on how to test flask

- Promising library for testing flask based apps

- Simple computer vision

- Understanding Quaternions

- Fundamentals of programming languages (pdf)

- W3C work on device discovery

- dap

- A thread on these W3C efforts

- An implementation of the Hindley Milner type inference system in python

Some interesting issues relating to the brains of programmers, from which parts of the brain they use when coding (not where some people might expect), to how pressures from coding drive people CRAZY - due to things like unrealistic expectations and overwork - along with debunking the whole left brain/right brain nonsense:

- Programmers are being driven crazy by their work, claims Business Insider

- An account of one programmer who was burnt out/driven crazy

- Many developers tend to under rate the utility of non technical workers in start-ups

- Scanning programmers' brains

- The Guardian had an interesting article on the left and right brain myth

Finally a neat site with a large collection of free (as in libre and gratis) fonts, searchable by usage type:

- Font squirrel is a source of free fonts

Is there anything that's caught your eyes in the past two weeks that I've not mentioned here? If so I'd be really interested to hear about it!
